Join devRant

Do all the things like

++ or -- rants, post your own rants, comment on others' rants and build your customized dev avatar

Sign Up

Pipeless API

From the creators of devRant, Pipeless lets you power real-time personalized recommendations and activity feeds using a simple API

Learn More

Related Rants

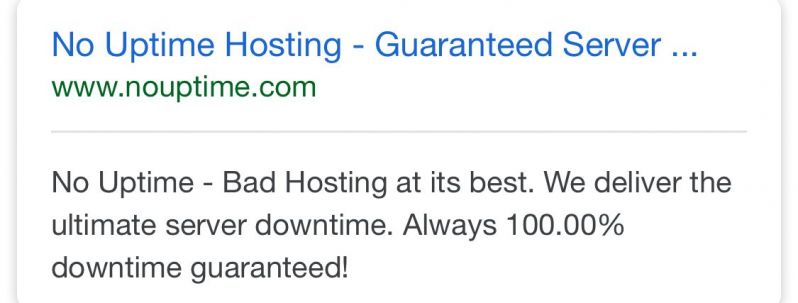

Came across this and wanted to share it with everyone here! Haha

Came across this and wanted to share it with everyone here! Haha

Each month my department compiles a 4M-row, 150-column data table for compliance with a federal agency. Before submitting, we check it against about 400 rules.

The existing system was simply 400 queries that ran in sequence, each one table-scanning all 4M rows. It took upwards of 6 hours, which is a huge bottleneck, especially if you have to make changes and rerun. Plus the output was rather one-dimensional.

I built a proper normalized database and created a sort of rules engine, running all 400 rules in one table scan. Not only does it complete in 30 minutes, but the reports generate automatically, and the results can be filtered on several dimensions to aid with root-cause analysis.

Management was pleased.
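
For anyone curious what "all rules in one table scan" can look like, here is a minimal sketch in Python. It is not the actual system: the `Rule` class, the three example rules, the column names (`amount`, `open_date`, `close_date`, `state`) and the `category` dimension are all made up for illustration. The idea is just that each row is read once and every rule is tested against it, instead of rereading the whole table once per rule.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Callable, Iterable

Row = dict  # one record of the table, keyed by column name (hypothetical layout)


@dataclass(frozen=True)
class Rule:
    rule_id: str
    category: str                    # hypothetical dimension used for filtering results
    violated: Callable[[Row], bool]  # returns True when the row breaks the rule


# Hypothetical rules standing in for the roughly 400 real ones.
RULES = [
    Rule("R001", "amounts", lambda r: r["amount"] < 0),
    Rule("R002", "dates", lambda r: r["close_date"] < r["open_date"]),
    Rule("R003", "required", lambda r: not r["state"]),
]


def check(rows: Iterable[Row], rules=RULES):
    """Scan the rows once, testing every rule against each row."""
    violations = []
    for row in rows:
        for rule in rules:
            if rule.violated(row):
                violations.append((row["id"], rule.rule_id, rule.category))
    return violations


# Tiny made-up sample; ISO date strings compare correctly as plain strings.
rows = [
    {"id": 1, "amount": 10, "open_date": "2020-01-01",
     "close_date": "2020-02-01", "state": "NY"},
    {"id": 2, "amount": -5, "open_date": "2020-03-01",
     "close_date": "2020-01-15", "state": ""},
]

violations = check(rows)
by_rule = Counter(rule_id for _, rule_id, _ in violations)
by_category = Counter(category for _, _, category in violations)
```

Because every violation is stored with its row id, rule id and category, the same result set can be counted or filtered along any of those dimensions afterwards, which is roughly what makes root-cause analysis easier than with one flat report per query.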

rant

wk91